Prompt debt: what happens after you build the library

Prompting is usually the first skill people build when they start learning AI, and unlike a lot of skills, it doesn't stay learned. The models change, the best practice shifts, and what worked well six months ago may now be producing results that are wildly off, without anyone quite knowing why.

What is prompt debt and why does it matter?

In every team I work with, prompting practice sits somewhere on a spectrum. Some teams have a library and use it consistently, while others have one that individuals have quietly adapted to their own needs, so the shared resource and the actual practice have diverged. And then some teams have no shared prompting practice at all; people do whatever works for them and none of it gets captured or widely shared. What's interesting about all three situations is that prompt debt accumulates regardless. The difference is just how visible it is.

Prompt debt borrows from software development's concept of technical debt: the accumulated cost of shortcuts, unmaintained code, and decisions that made sense at the time but haven't aged well. This concept translates directly to prompt libraries. Prompts written twelve months ago may have been built around techniques that research has since called into question. Sander Schulhoff's research, co-authored with teams at OpenAI, Google, and Stanford, found that role-based prompting, the "act as an expert copywriter" instruction that most of us have used, shows statistically insignificant accuracy improvement on analytical tasks. It still works well for expressive and stylistic tasks, which is most of what creative teams are doing, but the nuance matters. If your library was built on the assumption that persona framing is always the right move, parts of it are now working harder than they need to.

Why does a prompt library stop working?

Model behaviour has also shifted. A prompt written for GPT-4 in early 2024 behaves differently on GPT-5, Claude Opus, or whatever your team is using today. Register, structure, tone, and level of inference all vary by model and by model version. Teams managing multi-model workflows face an operational problem, and very little practical guidance is available.

Creative prompts also degrade in ways that enterprise governance frameworks tend to miss, because creative prompts are tied to things that move. Brand voice evolves, visual direction shifts, and a prompt that captured a client's tone accurately eighteen months ago may now be producing work that feels slightly off-register. If nobody owns the prompt library, nobody is checking whether it still reflects where the brief is today.

That question of ownership is where the published discourse goes quiet for creative teams. In enterprise contexts, CIO.com described prompts as "unowned infrastructure" earlier this year, and the observation is accurate. In tech and product teams, governance frameworks are starting to assign prompt ownership to specific functions. In creative agencies, production houses, and in-house teams, that conversation hasn't started. The closest thing most agencies have is an informal understanding that the person who built the library is responsible for it, which works until they leave or get busy, and then it quietly doesn't.

How do you audit a prompt library?

An audit doesn't have to be a large exercise. For most creative teams it comes down to four questions:

Which prompts are still in active use? Easier to assess than it sounds. If your library lives in a shared doc or Notion page, check the view or edit history. Prompts nobody has opened in three months are probably not in active use, or people have saved their own version somewhere else entirely, which is its own finding.

Which have been modified beyond recognition? Ask people to share the prompt they actually used for a recent piece of work and compare it to the library version. If they've added three lines of context, changed the tone instruction, and removed the format requirement, the library version is effectively a first draft that nobody is using. The individual adaptation is not the problem; the gap between that and the shared resource is.

Which were built for a model or technique that has since moved on? This requires knowing roughly when each prompt was written and which model it was written for. Prompts built around rigid persona framing in 2024 or early 2025 were written when that scaffolding was considered best practice. It still works for stylistic tasks, but if your library is full of it without distinction, you're applying a blunt tool where a lighter touch now does the same job.

Which have no identifiable owner or use case? This is the one that catches people off guard. These are the prompts that exist in the library with no context about what they were for, who wrote them, or which client or project they were built around. They look useful. Nobody quite knows if they are. And because nobody owns them, nobody feels responsible for updating or removing them. Start here.

That last category is usually larger than expected, and it is where the debt lives.
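If your library lives somewhere you can export from, the four questions can be roughed out in a few lines of Python. This is a minimal sketch rather than a finished tool: the `PromptRecord` fields, the flag wording, and the similarity cut-off are all assumptions of mine, and the 90-day window simply stands in for the "three months" mentioned above.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from difflib import SequenceMatcher
from typing import Optional


@dataclass
class PromptRecord:
    """One library entry. Every field name here is hypothetical."""
    name: str
    library_text: str                        # the version stored in the shared library
    last_opened: Optional[date] = None       # taken from the doc's view or edit history
    used_text: Optional[str] = None          # what someone actually used on recent work
    written_for_model: Optional[str] = None  # model the prompt was originally tuned for
    owner: Optional[str] = None
    use_case: Optional[str] = None


def audit(record, today, current_models, stale_after_days=90, min_similarity=0.6):
    """Return the audit flags raised by one prompt (empty list means no flags)."""
    flags = []

    # Q1: still in active use? Unknown history is treated as "not in use".
    if record.last_opened is None or today - record.last_opened > timedelta(days=stale_after_days):
        flags.append("not in active use")

    # Q2: modified beyond recognition? Compare library text with the version in use.
    # The 0.6 threshold is an assumed starting point, not a researched value.
    if record.used_text is not None:
        similarity = SequenceMatcher(None, record.library_text, record.used_text).ratio()
        if similarity < min_similarity:
            flags.append("modified beyond recognition")

    # Q3: built for a model the team no longer uses (or nobody recorded which one)?
    if record.written_for_model is None or record.written_for_model not in current_models:
        flags.append("model unknown or moved on")

    # Q4: no identifiable owner or use case?
    if not record.owner or not record.use_case:
        flags.append("no owner or use case")

    return flags


if __name__ == "__main__":
    # Illustrative record only; names and dates are made up.
    example = PromptRecord(
        name="headline-generator",
        library_text="Write a headline.",
        last_opened=date(2025, 1, 5),
    )
    print(example.name, audit(example, date(2025, 6, 10), {"model-b"}))
```

A spreadsheet with the same columns would do the job just as well. The point of writing it down is that each prompt ends up with an explicit list of reasons to review it, and the ones flagged for no owner or use case go to the top of the pile.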

The structured frameworks, CO-STAR, RACE, RASCEF and the rest, remain a practical starting point for standardisation, particularly for copy, briefs, concept development, and tonal consistency work.

What the research does suggest is that they work best as entry-level scaffolding rather than a complete methodology, as the conversation shifts toward context engineering: building brand guidelines, audience definitions, and style documents into the model as structured context, rather than rewriting them into individual prompts each time. That transition is something most creative teams have no sector-specific guidance for yet.
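To make the idea of context engineering concrete, here is a rough Python sketch of the difference: the brand guidelines, audience definition, and style notes are assembled once as shared context, and the prompt itself shrinks to the task. The `ContextDoc` type, the `build_messages` helper, and the sample content are hypothetical, and the role/content message list is an assumed shape that you would need to adapt to whatever tool your team uses.

```python
from dataclasses import dataclass


@dataclass
class ContextDoc:
    """A reusable reference document, e.g. brand voice or audience definition."""
    title: str
    body: str


def build_messages(context_docs, task):
    """Assemble the shared context once, then append the short task prompt."""
    sections = [f"## {doc.title}\n{doc.body.strip()}" for doc in context_docs]
    system = "Use the following reference material for every task.\n\n" + "\n\n".join(sections)
    return [
        {"role": "system", "content": system},  # maintained centrally, updated when the brand moves
        {"role": "user", "content": task},      # the only part written per job
    ]


if __name__ == "__main__":
    # Placeholder content for illustration only.
    docs = [
        ContextDoc("Brand voice", "Warm, direct, no jargon."),
        ContextDoc("Audience", "In-house marketing leads at mid-sized retailers."),
    ]
    for message in build_messages(docs, "Draft three subject lines for the spring launch email."):
        print(message["role"].upper())
        print(message["content"])
        print()
```

The design choice that matters is that the context documents have one home. When the brand voice shifts, someone updates one document rather than hunting through every prompt that once paraphrased it.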

The prompts your team built in 2025 are worth a look. Not because they're broken, but because the tools and the practice have moved, and a library that served you well six months ago might be quietly killing your team's confidence in AI when it doesn't have to.

Not sure how AI is feeling with your team? Find out how SIGNAL can help you.

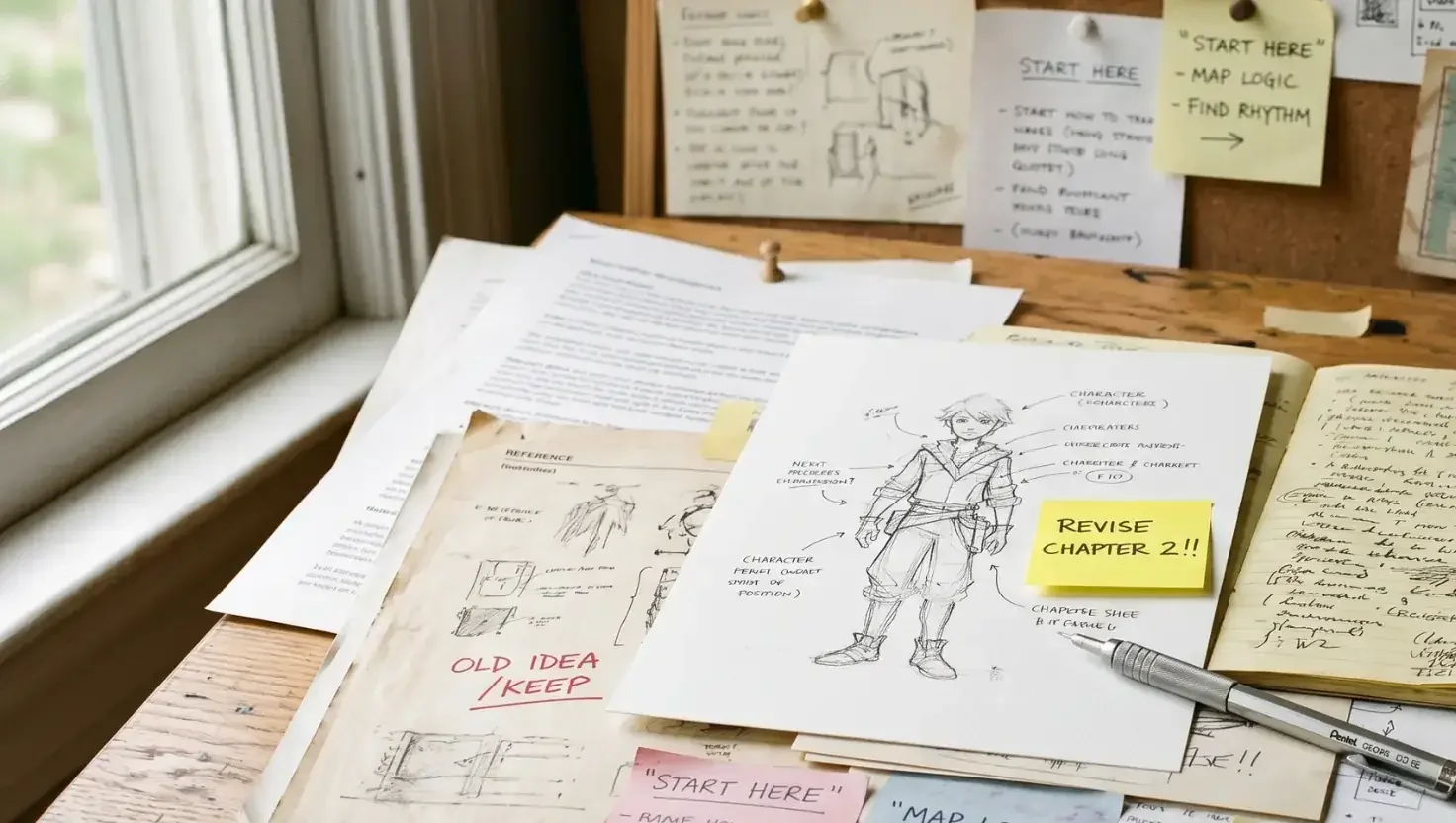

A note on how this was made: research for this article was conducted using Perplexity Deep Research, with academic and industry sources validated against published material. Gemini was used to generate the image (which you should be able to tell by the AI text issues). The content direction, the framing, the audience, the editorial judgement, and every significant decision along the way were made by me.