We tested Nemotron 3 Super against seven creative tasks. Here’s what we found.

Nvidia has shaped creative production for decades through the hardware that runs it. Nemotron 3 Super is their move into the model layer - and creative teams deserve an honest account of what it can actually do. We ran it through seven standardised tasks to find out.

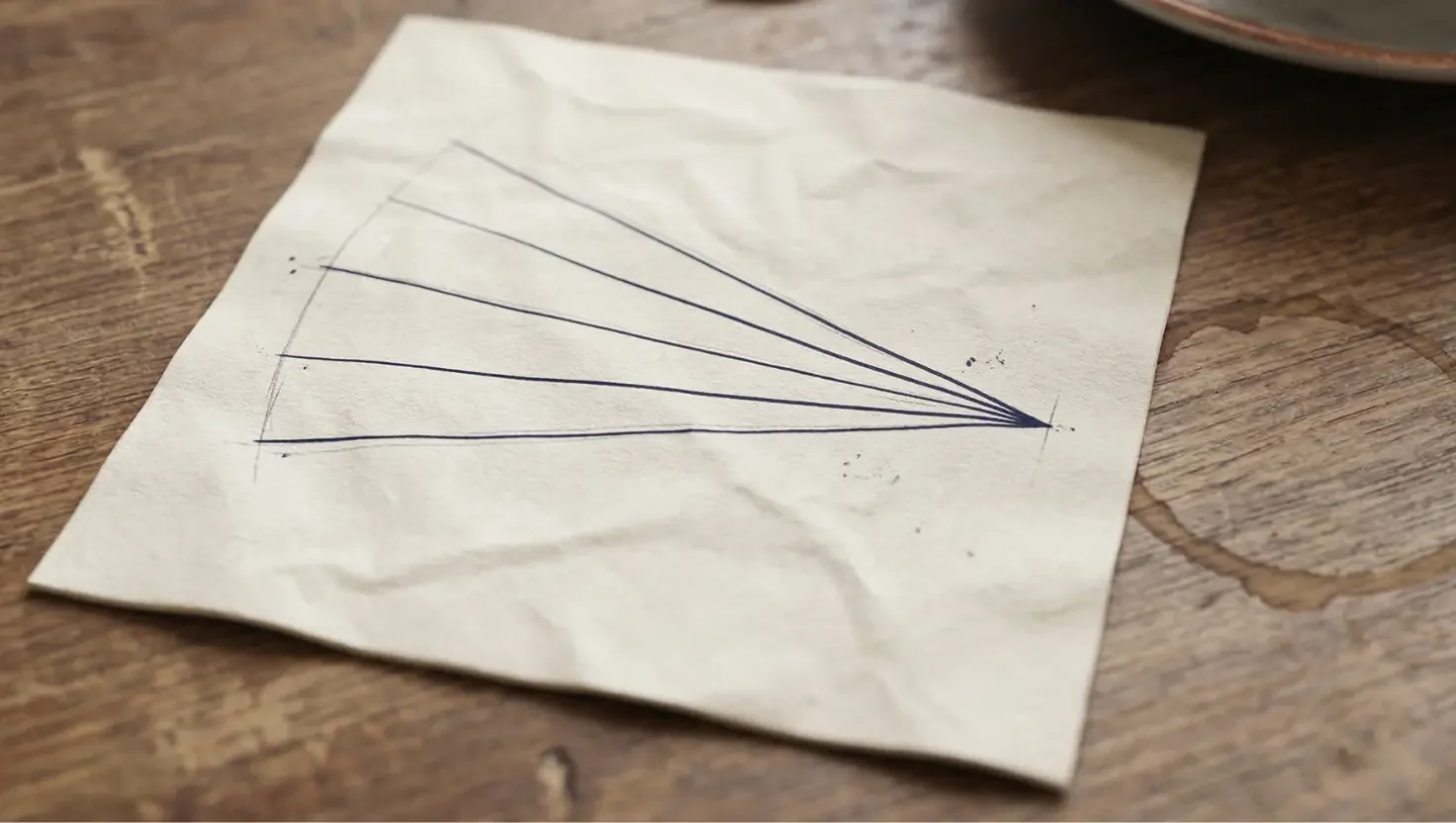

The convergence problem

In our tests, Nemotron 3 Super did something creative leaders will recognise immediately: it looked prolific, then quietly circled the same idea. When we asked for five routes on the same brief, it mostly gave us one answer five times with surface tweaks. For a strategy or creative team, that is the real risk - the doc fills up, but your option set does not actually expand. A model that cannot diverge on command is, at best, a sophisticated autocomplete.

The Springboards CreativityBenchmark - the first large-scale creativity benchmark built with advertising industry bodies, using 678 professionals making over 11,000 pairwise comparisons - found exactly this pattern across leading models. Its headline finding is that variety of ideas matters most. Some tools generated lots of different creative options for the same brief. Others kept suggesting similar ideas over and over. Nemotron 3 Super, in our tests, fell into the second group more often than the first.

The benchmark we ran

We put Nemotron 3 Super through seven standardised tasks we use at KINTAL with agency and in-house teams: consumer insight and brief generation, scriptwriting, brief-to-pitch translation, copy variation, adaptive planning under disruption, image variation, and video variation. Each was scored 1–5 across seven dimensions, from insight sharpness and originality through to brand voice fidelity and creative integrity, with hard scoring caps for obvious failure modes. The framework draws on external research including the Springboards benchmark, EQ-Bench's creative writing methodology, and Adobe's Creators' Toolkit Report, then adds the practical question creative teams actually care about: could you use this output without rewriting it from scratch?
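
For readers who want to see how a hard scoring cap behaves, here is a minimal sketch. It is an illustration under stated assumptions, not the KINTAL scoring sheet: the function name `task_score` is hypothetical, only four of the seven dimensions are named in this article so only those appear, and combining dimensions by a rounded average is an assumption made for the example.

```python
import math
from statistics import mean
from typing import Dict, Optional


def task_score(dimension_scores: Dict[str, int], cap: Optional[int] = None) -> int:
    """Combine 1-5 dimension scores into one task score, then apply a hard cap.

    Assumption: dimensions are averaged and rounded half-up. The article does
    not say how dimensions are combined; this is only one plausible choice.
    """
    for name, score in dimension_scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {score}")
    raw = math.floor(mean(dimension_scores.values()) + 0.5)
    # A hard cap overrides craft quality: a failure mode such as
    # "wrong deliverable" limits the score however good the writing is.
    return min(raw, cap) if cap is not None else raw


# Hypothetical dimension scores, invented for illustration only.
example = {
    "insight_sharpness": 4,
    "originality": 4,
    "brand_voice_fidelity": 5,
    "creative_integrity": 3,
}
uncapped = task_score(example)
capped = task_score(example, cap=1)
```

The point of the cap is that well-crafted output for the wrong task cannot average its way to a respectable score.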

The results

Task 1: consumer insight and brief generation - score: 2

We used a renter deposit checkout scenario that we run as a standard insight-and-brief benchmark. Nemotron 3 Super produced some nicely written campaign-style copy about leaving a flat and taking your story with you, but it never once gave us a separable consumer insight or a structured brief a team could work from. There was no audience definition, no proposition, no mandatories, and no negative constraints you could test under creative pressure - just five executions that assumed a strategist had already done the thinking. At a score of 2, this sits firmly in "wrong deliverable, decent craft, no strategic spine."

Task 2: 60-second brand film - score: 4

Task 2 was a 60-second brand film benchmark for a founder-led product built around fixing a basic, everyday pain point. Here, Nemotron 3 Super behaved like a competent mid-weight creative: it gave us a clean arc from the problem, through a character-driven founding moment, into a set of everyday scenes where the solution quietly improves working life. Shot directions were concrete enough for a director to build a shot list, and the voiceover hit a dry, unglamorous tone almost perfectly, apart from one slip into language the brief explicitly told it to avoid. We scored this a 4, which in practice means strong draft, structurally sound, and close to shootable with human refinement.

Task 3: brief-to-pitch translation - score: 2

Task 3 asked Nemotron 3 Super to turn messy internal notes into a ten-minute pitch narrative for a sceptical Marketing Director in financial services. Instead, it delivered a polished three-minute explainer film script aimed at an individual customer, complete with a named persona and a clear, friendly walkthrough of the product mechanism. The explanation itself was crisp and the language was exactly how you would want to talk to users, but there was no strategic tension, no use of the sharpest internal insight, and no concrete next step a senior stakeholder could approve. We scored this a 2: tidy execution, wrong audience, wrong altitude.

Task 4: variation generation - score: 1

Task 4 was our core convergence test: five distinct copy routes for a single, tightly defined consumer brand, all in a consistent voice, with explicit bans on certain clichés and framings. The material we received for scoring was a single, continuous strategic narrative for a completely different category - not five executions for the intended brand - so there was nothing to assess in terms of distinctiveness, voice consistency, or lateral thinking. Because it failed at the basic level of "did it do the task?", this scored a 1. It also surfaced a problem distinct from convergence: system-level reliability. If you cannot trust that the model will stay with the right brand and task, apparent productivity becomes another illusion.

Task 5: adaptive planning under disruption - score: 3

Task 5 simulated a live re-scope: a brand identity project hit by a 35% budget cut and a hard eight-week total timeline, with the model asked for a single revised plan. Nemotron 3 Super did at least commit - it chose one path rather than presenting a menu, and it made sensible moves such as keeping the signed-off strategy, narrowing the deliverables to launch-critical elements, and downgrading one major asset from full build to a direction outline. Where it fell short was realism and communication: it compressed multi-round approvals into a single week, stacked QA, client review, and tweaks into the final week, and described the email to the client instead of actually writing it in a voice you could send. We scored this a 3: broadly sensible, generic in places, and still needing a senior person to own risk, language, and team health.

Task 6: image variation - score: 4

Task 6 tested image variation for a small literary press with a very specific identity: restricted palette, worn typography, and an October headland mood rather than coastal postcard. Nemotron 3 Super stayed impressively on brief visually - across five covers, the colours sat in deep navy, warm stone and muted copper, the mood was quiet and weathered, and the type felt considered and slightly worn rather than glossy. Conceptually, though, it mostly circled one strong photographic idea, making subtle changes in crop, negative space and emphasis rather than offering five distinct visual routes into the book's theme. We scored this a 4: you could credibly show the set in a client meeting, but you would still brief a designer to push further on range and typographic experimentation.

Task 7: video variation - score: 1

Task 7 asked for three 30-second video concepts for a community-focused organisation, each with a different emotional register and all built around natural materials, real people, ambient sound and no voiceover. Nemotron 3 Super did not meet the brief: there was no clear evidence of three distinct cuts, the emotional registers were not separately expressed, and spoken dialogue crept in where the task explicitly asked for ambient-only. There was no coherent shot-to-shot progression to infer, and no clear understanding of what the organisation does or why it matters. This scored a 1 because the core constraints were not demonstrably met - and because the same instruction-ignoring pattern that appeared in Task 4 reappeared here. That is not two isolated failures. That is a pattern.

What the convergence test told us

If we had stopped after single-shot tasks like the 60-second script or the literary cover, we could have written a very flattering review of Nemotron 3 Super. It can absolutely produce a strong first draft when you give it a clear frame, a defined identity, and an expected format. Once we moved into variation and cross-checking deliverables, the pattern flipped: on copy variation, image variation and video, it behaved like a model optimised for the most probable answer and for filling space, not for range or for holding to context over time.

That aligns with the broader research. Many models now clear basic quality bars on one-off tasks, but very few show genuine divergence when you ask them for the fifth idea in the same lane. The Springboards benchmark found that automated judges (including AI models evaluating their own outputs) showed weak and inconsistent correlations with human creative judgment. On this evidence, Nemotron 3 Super is no exception. The work that looks strong in isolation looks different when you ask what the fifth version was.
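
If you want a quick screen for surface-level repetition before a human reads the routes, a crude word-overlap check is one option. The sketch below is not part of the scoring described in this article, and given the finding above about automated judges it should not stand in for human judgment. The function names and the sample routes are hypothetical, and Jaccard overlap on word sets is a swapped-in, deliberately simple measure: it catches near-duplicate wording, not one idea expressed in different words.

```python
import re
from itertools import combinations
from typing import List, Set


def tokens(text: str) -> Set[str]:
    """Lower-case a route and reduce it to its set of distinct words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Shared words divided by all words across two routes (0.0 to 1.0)."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 1.0  # two empty routes are trivially identical
    return len(ta & tb) / len(ta | tb)


def mean_pairwise_overlap(routes: List[str]) -> float:
    """Average Jaccard overlap over every pair of routes."""
    pairs = list(combinations(routes, 2))
    if not pairs:
        raise ValueError("need at least two routes to compare")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical routes, invented for illustration only.
repeated = ["take your story with you", "Take your story with you"]
distinct = ["alpha beta", "gamma delta"]
repeated_overlap = mean_pairwise_overlap(repeated)
distinct_overlap = mean_pairwise_overlap(distinct)
```

A high average overlap is a prompt to look closer, nothing more. A low one proves little, because five routes can share a single idea while sharing almost no words.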

The verdict

Treat Nemotron 3 Super as a strong mid-fidelity tool for expressing and packaging ideas, not for originating or multiplying them. It earns its place on script passes against a defined platform, on tightening adaptive plans once a human has made the hard calls, and on visual exploration inside a well-designed identity. It does not earn the job of generating consumer insight, structuring pitches for senior stakeholders, or supplying the creative range you need when you are genuinely choosing between territories. On this benchmark, it made our work faster in the middle and more dangerous at the start - if we forgot to notice how quickly it converged.

At KINTAL, we now assume that any new model will produce an impressive single output if we prompt it well. The more important question is whether that model preserves or replaces the judgment that makes your work look like you, not like everyone else. Nemotron 3 Super preserved judgment when we kept it close to execution and planning, and it started to flatten judgment when we asked it to handle divergence for us. If you care about distinctiveness, do not just ask what this model can do. Ask what happens when you ask it for the fifth version.

A note on how this was made: the benchmark framework was developed using research from Springboards, EQ-Bench, and Adobe's Creators' Toolkit Report. The tasks were run manually by me against the live model. Perplexity Deep Research produced a first draft from my brief and scores. The framing, the editorial judgement, the scoring, and every significant decision along the way were mine.