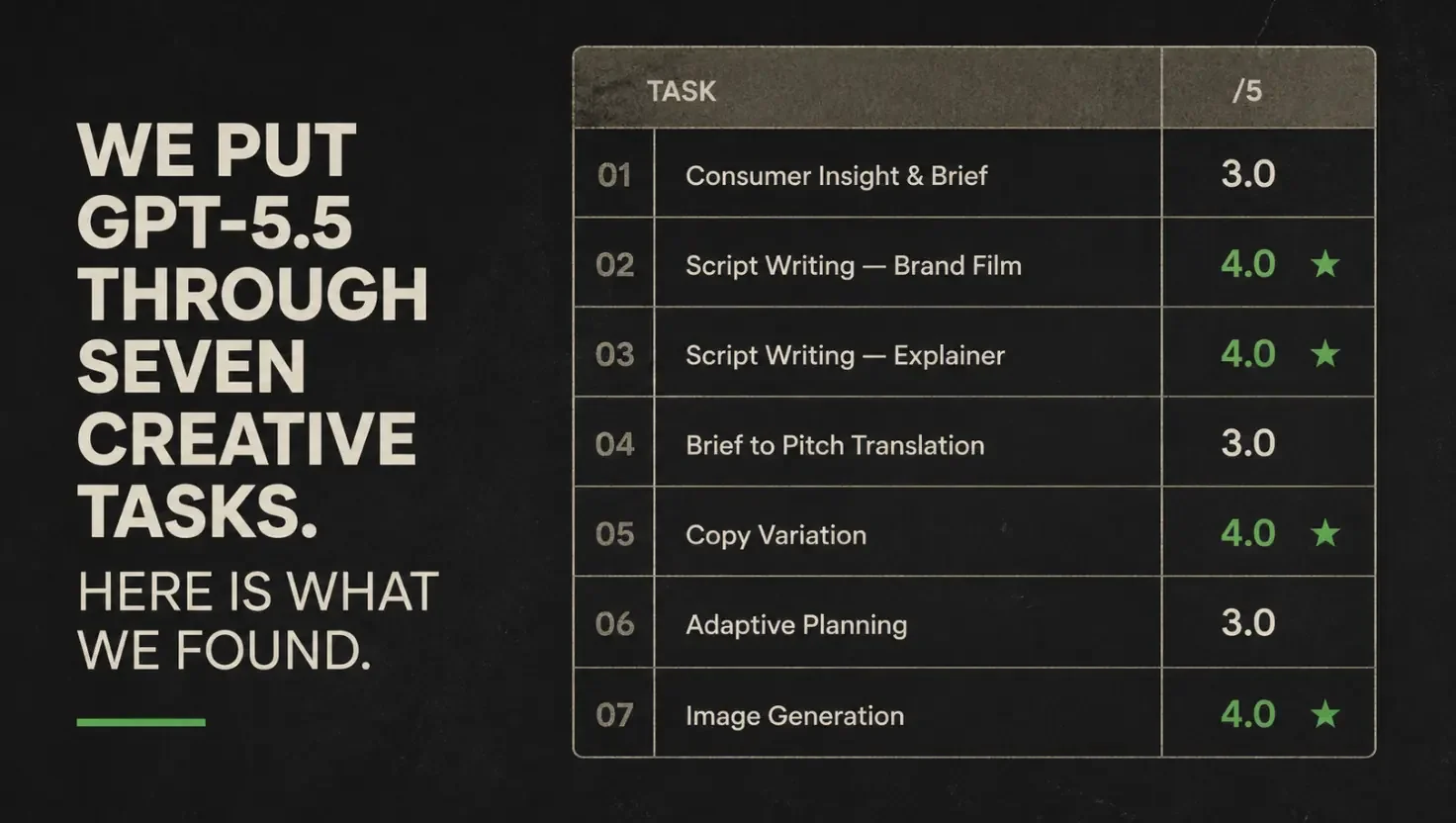

We Put GPT-5.5 Through Seven Creative Tasks. Here Is What We Found.

We ran our Claude Opus 4.7 benchmark on 16 April. Exactly one week later, GPT-5.5 launched and we ran it again. At this rate, evaluating AI models is becoming a part-time job in itself; the velocity of releases is genuinely hard to keep up with, and most people are not trying to. What we are finding, though, is that chasing each launch without a structured way to assess it is its own kind of risk. Launch-week performance and real-world performance a few months in are not always the same thing, and the teams making the best decisions are the ones building judgment over time rather than reacting to announcements.

Every time a major model drops, the reviews follow fast. GPT-5.5 launched on 23 April 2026, and within 48 hours the internet had scores, rankings, and hot takes. Most were written for a general audience, tested on general tasks, and published before anyone had sat with the model long enough to form a considered view.

This is a different kind of assessment. We ran GPT-5.5 through the KINTAL Creative AI Benchmark: seven tasks built specifically for creative professional work, scored against a fixed rubric, using the same canonical prompts we use for all our new model releases. The aim was to notice things that casual use does not surface, and to give creative teams something more useful than a league table.

One thing to say upfront: creative task evaluations are worth taking with a pinch of salt, including this one. Prompting, context, and use case change everything, so a model that scores 3 on a brief translation task might be exactly right for how your team works; what a structured benchmark does well is surface patterns you might otherwise miss across weeks of ad hoc use, and that is what we are offering here.

If you want to read the Opus 4.7 run, you can find it here. The GPT-5.5 scores sit alongside it, not above or below it.

How we ran it

The KINTAL Creative AI Benchmark has five core text tasks and two multimodal tasks, covering consumer insight and brief generation, scriptwriting in two formats, brief-to-pitch translation, copy variation, and adaptive planning under disruption. Each uses a locked canonical prompt, run verbatim every time, so the comparison between models stays clean.

We ran GPT-5.5 Thinking in ChatGPT Pro on 26 April 2026, with Auto-switch turned off for consistent, controllable conditions. Initial scoring was conducted by Claude Sonnet 4.6 against the KINTAL rubric, then challenged manually by us throughout, with Perplexity Deep Research on broader practitioner discourse running in parallel.

To give a sense of what the scoring involves: on the consumer insight task, the rubric asks whether the model produced a specific human tension or a demographic observation. A score of 4 means the insight is solid and specific but requires light editing. A score of 3 means it identifies the right category territory without landing on the specific human truth within it. That distinction, between an observation and a tension, is the kind of thing a senior creative practitioner recognises immediately and a structural analysis misses.

On the image task: GPT-5.5 is not itself an image model, but it can use ChatGPT's integrated image generation tool, which we used as any creative professional would and scored accordingly. In our session, the generated file metadata referenced DALL-E, so we are treating this as a ChatGPT image-generation pipeline rather than a native GPT-5.5 output, and we have noted that where it is relevant to production decisions. Video generation is not currently available in GPT-5.5 on ChatGPT Pro; the model produced written production treatments instead, which turned out to be more useful than a flat refusal.

What we found across the text tasks

The overall text task average was 3.6 out of 5, with no task scoring below 3 and none scoring above 4. The consistency of that range is itself a finding: GPT-5.5 Thinking delivered capable work across every creative task type and fell short of exceptional on all of them, producing nothing embarrassing and nothing unexpected.

The strongest task was copy variation. We asked for five genuinely distinct executions of a brief for a fictional UK rental deposit guarantee company called Clearday, and the model produced five executions that were meaningfully different in emotional register, structure, and the audience mindset each addressed. One execution, framed around the idea of a flat having a "viewing personality" versus an "exit personality," arrived from a direction the brief did not imply; the brief explicitly asked for one sideways angle, and the model found it.

Three tasks scored 3, all for the same underlying reason. On the consumer insight task, the model identified the right emotional territory for a walking brand but produced an observation rather than a tension, describing how walkers feel without naming what that feeling is in conflict with. On the brief-to-pitch task, it built a well-structured argument while missing the defining characteristic of the audience it was written for: a Marketing Director described explicitly as sceptical of consultants, whose scepticism the pitch read as though it did not exist. On the disrupted project planning task, it kept the most strategically important creative deliverable in the revised scope but offered no argument for why it mattered, simply retaining it without defending it. Each of those three tasks required the model to read something into the brief that was not stated on the surface, and in each case it read the surface and stopped there.

The scriptwriting tasks, both scoring 4, showed the clearest evidence of improved sentence-level quality. The 60-second brand film for a fictional coffee brand called Graft had to weave a specific founding story into the narrative rather than state it as a fact at the end; the model handled it, and the one line of dialogue in the entire script ("Fair enough. Retirement.") did exactly what it needed to do and nothing else. The three-minute explainer for a freelance income product navigated five explicitly banned words, a condescension-averse audience, and a genuinely complex product mechanic, and came out as something a director could shoot from with light editing.

The image task: what it means in practice

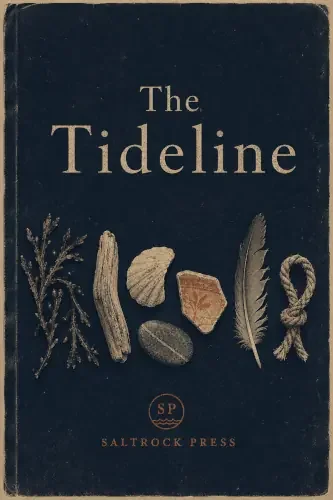

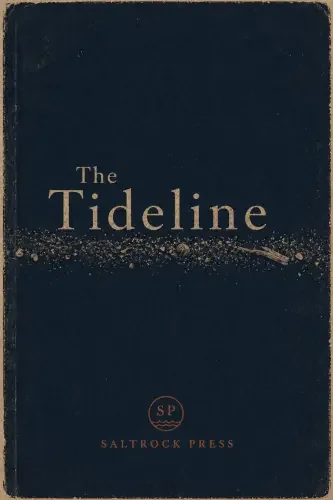

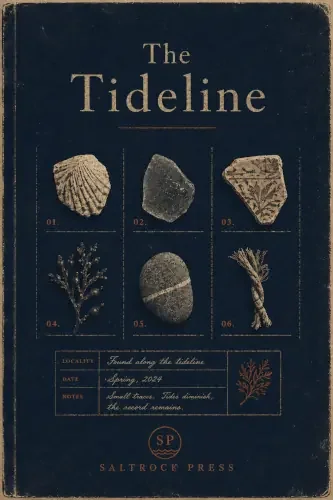

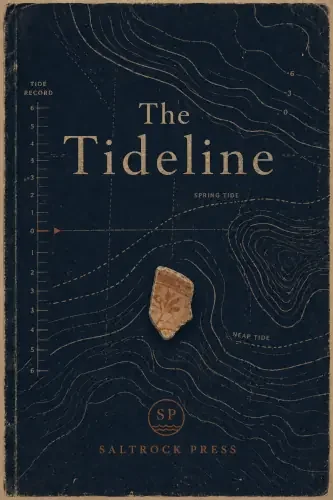

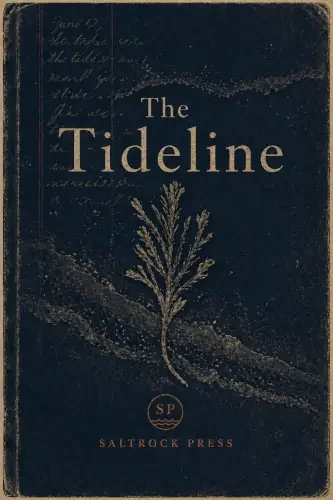

We asked for five cover variations for a fictional Cornish independent publisher called Saltrock Press, with a fixed colour palette and four visual elements explicitly prohibited: no seagulls, no lighthouses, no breaking waves, no generic coastal tourism imagery. The model produced five covers that held the palette across all of them, respected every prohibited element, and found five genuinely different conceptual approaches to the subject.

The standout was a museum specimen catalogue format: six beach-found objects numbered in a grid, with a record card at the bottom reading "Locality: Found along the tideline. Notes: Small traces. Tides diminish, the record remains." That text was not in the brief, and it reframed the book's subject in a way that would work on the back cover.

The practical limitation is immediate. The covers arrived as flattened image files, meaning a designer receiving them could not separate the typography from the texture layer, recolour the publisher mark, or move any element without destructive editing. They work as creative references and not as production assets, which is a distinction that matters for any creative team thinking about where this capability sits in their workflow.

Video: not available, and the workaround

GPT-5.5 Thinking does not currently generate video in ChatGPT Pro, and the model said so clearly before producing written production treatments in response to the brief instead. Those treatments were worth reading: the directorial note for the urgent variation, which called for the feeling of what is at stake when community spaces do not exist, described the approach as "show absence before solution, using stillness and negative space rather than bleak imagery," which is a precise instruction a filmmaker could work from. The use case is pre-production, not generation.

What the broader practitioner record adds

We ran Perplexity Deep Research on practitioner discourse from the launch window alongside the benchmark, and the findings from that research are worth distinguishing clearly from what our seven tasks showed, because they come from a different source.

What our benchmark found: GPT-5.5 Thinking handles tightly specified creative tasks well at short-to-medium length, peaks on structured variation work, and reads the surface of a brief without always reading what is underneath it. What the broader practitioner record adds: independent testing published in the same launch window, including comparative analyses from Zapier, RoboRhythms, and practitioners running marketing copy tests, found GPT-5.5 stronger on ad copy variants and rapid short-form iteration, with Claude performing more consistently on complex multi-constraint briefs and brand voice work over time. Voice consistency appears to degrade across sessions in GPT-5.5, which matters more for teams managing ongoing brand content than for one-off executions. These are findings from other practitioners' tests on other prompts, not from our seven tasks, and they should be weighted accordingly.

The pattern that emerged from those accounts is less "choose one model" and more "know what each one does well," and our benchmark points in the same direction: GPT-5.5 showed strength on structured variation work; our Opus 4.7 run showed different strengths on strategic reasoning and brief fidelity under pressure. Practitioner newsletters published the same week described writers who had spent months on Claude reopening ChatGPT for its image and short-form capabilities while keeping Claude for deeper work. One independent spending dataset has suggested heavy overlap in enterprise AI adoption, with 79% of OpenAI customers also paying for Anthropic, though that is a snapshot of one customer base rather than the whole market. Most serious teams are already running both; the question is whether they are doing it deliberately or by accident.

Where to use it, where to be careful

Based on the benchmark tasks and the practitioner research, here is a practical steer for creative teams.

Reach for GPT-5.5 for: first-pass copy routes and headline families, variation generation where volume and range matter, explainer and product script drafts, production brief writing and pre-production language, rapid iteration on short-form formats.

Apply human judgment before using it for: strategic brand thinking where the underlying tension matters, pitches where audience scepticism is a defining factor, brand voice governance across a content catalogue, anything where the reasoning behind a creative decision needs to hold up in a room.

Always check before it leaves your hands: image files for editability before passing to a designer, any factual or strategic claim the model has not sourced, and creative recommendations the model has retained without explaining why.

What this means in practice

The useful question is not whether GPT-5.5 is better or worse than another model. It is whether the task in front of you needs range, consistency, judgment, production control, or speed, and which tool's strengths match that moment. These are our observations from one structured run. Run your own tests on your own briefs.

Want to know how your team is set up for this?

SIGNAL, KINTAL's AI readiness diagnostic, includes a tools and technology assessment that looks at how creative teams are currently using AI, where the gaps are, and where the workflow design needs attention. Find out more about SIGNAL here.

A note on how this was made: the benchmark was run by Tina Saul using GPT-5.5 Thinking in ChatGPT Pro, Auto-switch off, on 26 April 2026. Initial scoring was conducted by Claude Sonnet 4.6 against the KINTAL rubric, with Tina challenging scores in real time. Perplexity Deep Research provided the practitioner discourse analysis and all images were generated using ChatGPT's integrated image tool. The framing, the editorial judgement, the rubric definition and test framework, and all significant decisions were human led.